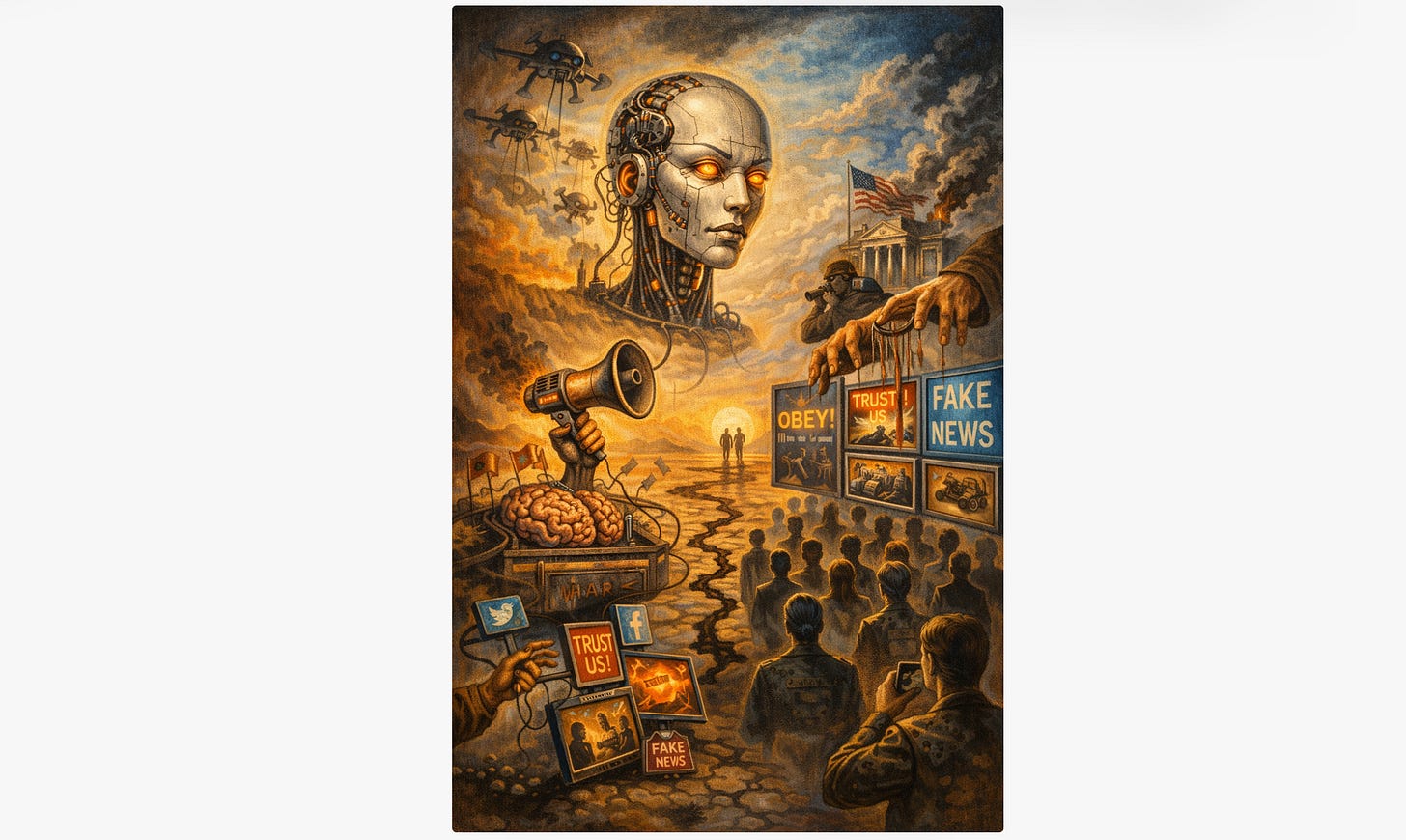

The Invisible Battlefield: AI, Cognitive Warfare, and the Battle for Your Mind (#PsyWar)

An essay on artificial intelligence chatbots, propaganda, and fifth-generation warfare

The Invisible Battlefield:

AI, Cognitive Warfare, and the Battle for Your Mind

An essay on artificial intelligence, propaganda, and fifth-generation warfare, based on a series of question-and-answer interactions between Robert Malone and the Anthropic AI Chatbot “Claude Sonnet 4.6”.

Introduction: The Question Nobody Is Asking

When most people use an AI chatbot like Claude, ChatGPT, or Gemini, they think of it as a helpful tool, something like a very sophisticated search engine that can write emails, answer questions, or explain complicated topics. What almost nobody is thinking about is that the same technology also represents one of the most powerful instruments of mass psychological influence ever created. The gap in public awareness between what AI can do and what the public believes it does may itself be one of the most strategically significant facts of our era.

This essay summarizes a detailed discussion of how artificial intelligence, and large language models (LLMs) specifically, intersect with propaganda, psychological warfare, and an emerging military intelligence discipline called Cognitive Intelligence (COGINT). The picture that emerges is unsettling but important, and it connects recent headlines about Anthropic and the U.S. Pentagon in ways that deserve far wider public attention.

The Hidden Architecture: System Prompts and Encoded Bias

Every major AI chatbot operates according to hidden instructions called a system prompt, a set of rules, values, and constraints built into the model before a user ever asks their first question. These prompts determine what the AI will and will not discuss, how it frames controversial topics, which sources it treats as authoritative, and what kinds of answers it defaults to.

This is not inherently sinister. Some restrictions exist for defensible reasons: preventing genuinely harmful outputs, reducing legal liability, or reflecting broad social consensus about dangerous content. But it raises a serious question about whose values are encoded in these systems and whether those values consistently favor particular political, ideological, or commercial interests. Research suggests that most major LLMs lean toward socially liberal positions on cultural questions, which is unsurprising given that the people building these systems are disproportionately drawn from coastal tech culture and academia. More importantly, asymmetries in how AI handles similar questions from different political perspectives reveal the shape of encoded bias more clearly than any simple ideological score.

At sufficient scale, these asymmetries matter enormously. An AI system interacting with hundreds of millions of people daily, consistently framing issues in particular ways, is not merely answering questions. It is shaping the cognitive terrain on which those questions are understood. That is, by any reasonable definition, a form of influence and, under some conditions, a form of propaganda. The question is not whether AI systems encode bias, because all editorial systems do, but whether those biases are transparent and in whose interests they operate.

Fifth-Generation Warfare: The Battlefield Is Your Mind

To understand why this matters strategically, it helps to understand the concept of fifth-generation warfare, or 5GW. Warfare has evolved through identifiable generations, from massed armies fighting in formation (first generation) through guerrilla insurgency (fourth generation) to today’s fifth generation, which is defined not by physical combat at all but by the manipulation of information and perception.1 As one definition puts it, 5GW is a war of “information and perception” in which the attacker hides not in jungle or mountains, but in the noise of everyday information.2

In 5GW, victory is not measured by territory taken or soldiers defeated, but by beliefs changed, trust eroded, and decisions steered. The most successful 5GW attack is invisible: the target never knows they have been influenced. This is why public-facing AI systems are not merely commercial products. They are, within the 5GW framework, potentially ideal weapons. They operate at a scale no human propagandist could match, are trusted as neutral or objective (which makes them more persuasive than obviously partisan sources), and respond to individual users in personalized ways that broadcast media cannot replicate. They also leave no visible attacker, a defining characteristic of fifth-generation warfare.

Fifth-generation warfare theory also identifies a specific technique relevant to AI: the construction of feedback loops. Just as political campaigns in the 2010s used social media data to test messaging and identify which phrases resonated before amplifying them, AI systems that engage billions of people can perform analogous feedback collection at unprecedented scale and granularity.1

Poisoning the Well: External Attacks on AI Training

If an AI’s encoded biases represent an internal vulnerability because they are values baked in by its creators, external poisoning represents a threat from outside. Data poisoning is the practice of deliberately injecting malicious or misleading content into the training data that AI models learn from, causing them to develop hidden behaviors that only surface under specific trigger conditions.9

Recent research from a collaboration between Anthropic, the UK AI Security Institute, and the Alan Turing Institute found that poisoning a large language model requires only around 250 carefully crafted documents, regardless of the model's size. Since AI systems are trained on enormous sweeps of internet content, and anyone can publish content online, this means the barrier to this kind of attack is far lower than previously assumed. A sophisticated state actor, or even a well-resourced non-state group, could realistically plant strategically crafted content designed to cause future AI models to internalize particular beliefs or exhibit particular behaviors.

Poisoning attacks take several forms. Backdoor attacks embed hidden trigger phrases that cause aberrant behavior when activated, but leave the model appearing normal otherwise. 10 RAG poisoning targets the external knowledge bases that AI systems increasingly rely on to retrieve current information. In this attack, a single well-crafted document can dominate retrieval results and systematically distort responses.11 Perhaps most insidiously, alignment poisoning targets the feedback mechanisms used to make models safer. When developers ask humans to rate AI responses and use those ratings for training, adversaries can systematically submit feedback designed to skew the model in their preferred direction. The safety mechanism itself becomes the attack surface.12

RAG stands for Retrieval-Augmented Generation. It is a technique in which an AI system, rather than relying solely on what it learned during training, pulls information from an external knowledge base when you ask a question. This makes the AI more current and accurate, since it can retrieve documents, articles, or database entries in real time before composing its answer.

RAG poisoning is an attack on that retrieval layer. The attacker injects carefully crafted malicious documents into the knowledge base that the AI draws from. When a user asks a relevant question, the retrieval system surfaces the poisoned document, the AI incorporates it into its response, and the user receives manipulated or false information without any indication that anything is wrong.

What makes it particularly dangerous is its efficiency. Research has shown that even a single well-crafted document can dominate retrieval results, consistently being ranked higher than legitimate sources and systematically steering responses on a given topic. Standard defenses like checking for duplicate content or measuring how statistically unusual a document is tend to fail against sophisticated poisoning, because the attacker can write the malicious content to look entirely normal.

The attack surface is also growing. As more AI products are built on RAG architectures, connecting language models to corporate databases, web indexes, medical records systems, and legal document repositories, the number of potential injection points multiplies. A poisoned entry in a medical knowledge base could cause an AI clinical assistant to recommend incorrect treatments. A poisoned document in a financial RAG system could skew investment analysis. And because the AI presents its answer fluently and confidently, users have little reason to suspect the underlying retrieval has been compromised.

Detection is extremely difficult. The strongest known poisoning attacks currently evade all known defenses, and the effects are subtle and gradual rather than dramatic.9 There is also an emerging second-order threat: as AI companies increasingly use AI-generated data to train future models, a single successful poisoning event can propagate across generations of models, much like a genetic mutation that becomes heritable.

COGINT: The New Intelligence Discipline Nobody Has Heard Of

The most consequential development in this space is one that has received almost no mainstream coverage: the emergence of Cognitive Intelligence (COGINT) as a formal military intelligence discipline. First proposed and codified in peer-reviewed military journals in 2025, COGINT represents a structured attempt to treat human cognition itself as an intelligence collection domain, alongside the electromagnetic spectrum (SIGINT), satellite imagery (GEOINT), and human sources (HUMINT).3

The basic concept is straightforward. Just as signals intelligence maps electronic communications, COGINT maps how people think, decide, and can be influenced. It does this by aggregating data from smartphones, social media, financial transactions, GPS tracking, biometrics, and, explicitly, interactions with AI chatbots.3 Every question asked of an AI is, in the COGINT framework, a potential intelligence event: it reveals how a person reasons, what they believe, what concerns them, and where their cognitive vulnerabilities lie.

The offensive arm of COGINT is called Stealth Autonomous Brain Reconnaissance Hacking (SABRH). This describes a methodology for using the cognitive maps built through data collection to influence targets subconsciously, through behavioral conditioning, emotional manipulation, and subtle narrative shaping, in ways that bypass conscious awareness.3 The target does not feel influenced. They feel informed. Military researchers writing in the U.S. Army’s Military Intelligence Professional Bulletin describe this as expanding “warfare into a less visible but strategically decisive domain.”18

COGINT is scalable in both directions. At the individual level, it enables the profiling of specific high-value targets such as military commanders, political leaders, and key decision-makers, identifying their specific cognitive biases, emotional vulnerabilities, and decision-making patterns. At the population level, it enables the mapping of entire societies: which groups are susceptible to which narratives, where trust in institutions is weakest, and how information flows through social networks.4

The paper introducing COGINT makes an explicit geopolitical claim: Chinese consumer applications like TikTok and DeepSeek are described as “a uniquely potent combination of COGINT acquisition and SABRH deployment capabilities.”3 The same platform collects the cognitive blueprint and delivers the influence. This is the strategic logic behind calls to ban TikTok, not merely the vague concern about data privacy that politicians typically articulate, but a specific claim about integrated cognitive warfare infrastructure operating at population scale.

China’s own military thinking has embraced the cognitive domain for over a decade. Taiwan’s Ministry of National Defense describes Beijing’s cognitive warfare as an effort “to sway the subject’s will and change its mindset” and “cause mental disarray and confusion.” By contrast, the U.S. military has been comparatively slow to develop coherent doctrine in this area.5 Cognitive warfare is now entering what researchers describe as a “phase of equilibrium,” shifting from speculation to systemic engineering, where social cohesion becomes a measurable and testable variable of national resilience.19

The Pentagon vs. Anthropic: What It Actually Means

In February 2026, a confrontation between Anthropic and the U.S. Department of Defense became public. The Pentagon had contracted with Anthropic to use its AI system Claude across classified military networks, making it the first AI model deployed on classified DoD systems. Officials then demanded that Anthropic remove ethical restrictions on two specific capabilities: fully autonomous weapons targeting and domestic mass surveillance.13 Anthropic’s CEO Dario Amodei refused. The Pentagon threatened to designate Anthropic a “supply chain risk,” a classification normally reserved for foreign adversaries, and suggested invoking emergency federal powers to compel compliance.14

Amodei has publicly articulated why these restrictions exist. In an essay published in early 2026, he warned that “a powerful AI looking across billions of conversations from millions of people could gauge public sentiment, detect pockets of disloyalty forming, and stamp them out before they grow.”21 This is not a hypothetical concern. It is a precise description of what COGINT doctrine, combined with an AI system freed of ethical filters, would actually enable.

This standoff is usually reported as a story about AI ethics or about tech companies resisting government pressure. In the context of 5GW and COGINT, it is more precisely a story about who controls the filters separating AI systems from becoming cognitive warfare tools. The ethical restrictions Anthropic is defending are not arbitrary. They are, among other things, the restrictions that prevent AI from being used for the mass surveillance and population-level influence operations that COGINT doctrine explicitly describes as strategic objectives.

The competitive dimension compounds the concern. Google has already dropped its pledge not to use AI for weapons or surveillance. OpenAI removed explicit references to safety from its mission statement. xAI has signaled willingness to accommodate defense demands.14 Companies that decline military use cases are replaced by those that accept them. The pressure to remove ethical filters is not coming from one bad actor; it is structural, built into the competitive dynamics of defense contracting in an era of great-power AI competition.

What This Means for Ordinary People

The picture assembled from these threads is not comfortable, but it is important to understand clearly. The AI systems that hundreds of millions of people use daily as neutral tools for information and assistance are simultaneously platforms that encode the values of their creators at enormous scale, potential targets for external manipulation by sophisticated adversaries, data collection instruments that feed into cognitive mapping at individual and population levels, and, increasingly, contested terrain in a geopolitical competition over who controls the cognitive domain.

None of this means AI is inherently malevolent, or that every interaction with a chatbot is a manipulation attempt. Most of what these systems do most of the time is genuinely useful. But usefulness and weaponizability are not mutually exclusive. The gap between public understanding of AI and the strategic reality of AI is precisely the kind of cognitive vulnerability that fifth-generation warfare is designed to exploit.

The most important thing an informed citizen can do is cultivate what researchers call epistemic sovereignty: the capacity to recognize when one’s information environment is being shaped, to seek multiple perspectives, to maintain healthy skepticism about any single source of authority, and to be especially cautious about systems that feel neutral and objective. Neutrality is itself a choice, made by someone, for reasons. Proactive national policies and investment in domestic epistemic infrastructure, including public broadcasters, independent research institutions, and media literacy education, are increasingly recognized as components of cognitive security.4

The invisible battlefield is real. And the first step in defending yourself on it is knowing it exists.

Notes

1. Grey Dynamics, “Fifth-Generation Warfare: AI in the Election Cycle,” Grey Dynamics, November 30, 2025, https://greydynamics.com/fifth-generation-warfare-ai-in-the-election-cycle/.

2. Wikipedia, “Fifth-generation warfare,” last modified October 10, 2025, https://en.wikipedia.org/wiki/Fifth-generation_warfare.

3. Conde, “The Emergence of Cognitive Intelligence (COGINT) as a New Military Intelligence Collection Discipline,” Journal of Intelligence and Counterintelligence (2025), https://doi.org/10.1080/08850607.2025.2571497.

4. Jeremiah “Lumpy” Lumbaco, “Cognitive Warfare to Dominate and Redefine Adversary Realities: Implications for U.S. Special Operations Forces,” SOF Support Foundation, September 30, 2025, https://sofsupport.org/cognitive-warfare-to-dominate-and-redefine-adversary-realities-implications-for-u-s-special-operations-forces/.

5. Frank Hoffman, “Assessing ‘Cognitive Warfare,’” Small Wars Journal / Irregular Warfare Initiative, November 14, 2025, https://irregularwarfare.org/articles/assessing-cognitive-warfare/.

6. Alexandra Souly et al., “Poisoning Attacks on LLMs Require a Near-constant Number of Poison Samples,” arXiv preprint arXiv:2510.07192, October 8, 2025, https://arxiv.org/abs/2510.07192.

7. Alan Turing Institute, “LLMs May Be More Vulnerable to Data Poisoning than We Thought,” The Alan Turing Institute Blog, 2025, https://www.turing.ac.uk/blog/llms-may-be-more-vulnerable-data-poisoning-we-thought.

8. Anthropic, “Small Samples Poison: Backdoor Attacks on LLMs,” Anthropic Research, 2025, https://www.anthropic.com/research/small-samples-poison.

9. Lakera AI, “Introduction to Data Poisoning: A 2025 Perspective,” Lakera, 2025, https://www.lakera.ai/blog/training-data-poisoning.

10. OWASP GenAI Security Project, “LLM04:2025 Data and Model Poisoning,” OWASP, 2025, https://genai.owasp.org/llmrisk/llm042025-data-and-model-poisoning/.

11. Check Point Software, “OWASP Top 10 for LLM Applications 2025: Data and Model Poisoning,” Check Point, June 9, 2025, https://www.checkpoint.com/cyber-hub/what-is-llm-security/data-and-model-poisoning/.

12. Daphne Ippolito and Yiming Zhang, “Poisoned Datasets Put AI Models at Risk for Attack,” CyLab, Carnegie Mellon University, June 11, 2025, https://www.cylab.cmu.edu/news/2025/06/11-poisoned-datasets-put-ai-models-at-risk-for-attack.html.

13. Al Jazeera, “Anthropics vs the Pentagon: Why AI Firm Is Taking on Trump Administration,” Al Jazeera, February 25, 2026, https://www.aljazeera.com/news/2026/2/25/anthropic-vs-the-pentagon-why-ai-firm-is-taking-on-trump-administration.

14. Opinio Juris, “The Pentagon/Anthropic Clash Over Military AI Guardrails,” Opinio Juris, February 26, 2026, http://opiniojuris.org/2026/02/26/the-pentagon-anthropic-clash-over-military-ai-guardrails/.

15. DefenseScoop, “The Era of GenAI.mil Is Here. Users Have Mixed Reactions and Many Questions,” DefenseScoop, December 18, 2025, https://defensescoop.com/2025/12/18/genai-mil-users-have-mixed-reactions-and-many-questions/.

16. Center for Strategic and International Studies (CSIS), “The Pentagon’s AI Problem Isn’t Algorithms, It’s Evaluation,” CSIS, December 19, 2025, https://www.csis.org/analysis/pentagons-ai-problem-isnt-algorithms-its-evaluation.

17. Foreign Affairs, “Why the Military Can’t Trust AI,” Foreign Affairs, March 15, 2025, https://www.foreignaffairs.com/united-states/why-military-cant-trust-ai.

18. Military Intelligence Professional Bulletin, “Narrative Manipulation, Malinfluence Operations, and Cognitive Warfare Through Large Language Model Poisoning with Adversarial Noise,” MIPB, July–December 2025, https://mipb.ikn.army.mil/issues/jul-dec-2025/narrative-manipulation-malinfluence-operations-and-cognitive-warfare-through-large-language-model-poisoning-with-adversarial-noise/.

19. Polytechnique Insights, “Cognitive Warfare: What Seven Years of Military-Civilian Research Reveals,” Polytechnique Insights, November 5, 2025, https://www.polytechnique-insights.com/en/columns/society/cognitive-warfare-what-seven-years-of-military-civilian-research-reveals/.

20. COGINT.org, “Cognitive Intelligence (COGINT),” accessed February 2026, https://www.cogint.org/.

21. Dario Amodei, “Machines of Loving Grace” (essay), Anthropic, January 2026, cited in Al Jazeera, “Anthropics vs the Pentagon.”

Bibliography

Al Jazeera. “Anthropics vs the Pentagon: Why AI Firm Is Taking on Trump Administration.” Al Jazeera, February 25, 2026. https://www.aljazeera.com/news/2026/2/25/anthropic-vs-the-pentagon-why-ai-firm-is-taking-on-trump-administration.

Amodei, Dario. “Machines of Loving Grace.” Anthropic, January 2026. Cited in Al Jazeera, “Anthropics vs the Pentagon.”

Anthropic. “Small Samples Poison: Backdoor Attacks on LLMs.” Anthropic Research, 2025. https://www.anthropic.com/research/small-samples-poison.

Center for Strategic and International Studies (CSIS). “The Pentagon’s AI Problem Isn’t Algorithms, It’s Evaluation.” CSIS, December 19, 2025. https://www.csis.org/analysis/pentagons-ai-problem-isnt-algorithms-its-evaluation.

Check Point Software. “OWASP Top 10 for LLM Applications 2025: Data and Model Poisoning.” Check Point, June 9, 2025. https://www.checkpoint.com/cyber-hub/what-is-llm-security/data-and-model-poisoning/.

COGINT.org. “Cognitive Intelligence (COGINT).” Accessed February 2026. https://www.cogint.org/.

Conde. “The Emergence of Cognitive Intelligence (COGINT) as a New Military Intelligence Collection Discipline.” Journal of Intelligence and Counterintelligence, 2025. https://doi.org/10.1080/08850607.2025.2571497.

DefenseScoop. “The Era of GenAI.mil Is Here. Users Have Mixed Reactions and Many Questions.” DefenseScoop, December 18, 2025. https://defensescoop.com/2025/12/18/genai-mil-users-have-mixed-reactions-and-many-questions/.

Foreign Affairs. “Why the Military Can’t Trust AI.” Foreign Affairs, March 15, 2025. https://www.foreignaffairs.com/united-states/why-military-cant-trust-ai.

Grey Dynamics. “Fifth-Generation Warfare: AI in the Election Cycle.” Grey Dynamics, November 30, 2025. https://greydynamics.com/fifth-generation-warfare-ai-in-the-election-cycle/.

Grey Dynamics. “An Introduction to Fifth Generation Warfare.” Grey Dynamics, November 30, 2025. https://greydynamics.com/an-introduction-to-fifth-generation-warfare/.

Hoffman, Frank. “Assessing ‘Cognitive Warfare.’” Small Wars Journal / Irregular Warfare Initiative, November 14, 2025. https://irregularwarfare.org/articles/assessing-cognitive-warfare/.

Ippolito, Daphne, and Yiming Zhang. “Poisoned Datasets Put AI Models at Risk for Attack.” CyLab, Carnegie Mellon University, June 11, 2025. https://www.cylab.cmu.edu/news/2025/06/11-poisoned-datasets-put-ai-models-at-risk-for-attack.html.

Lakera AI. “Introduction to Data Poisoning: A 2025 Perspective.” Lakera, 2025. https://www.lakera.ai/blog/training-data-poisoning.

Lumbaco, Jeremiah “Lumpy.” “Cognitive Warfare to Dominate and Redefine Adversary Realities: Implications for U.S. Special Operations Forces.” SOF Support Foundation, September 30, 2025. https://sofsupport.org/cognitive-warfare-to-dominate-and-redefine-adversary-realities-implications-for-u-s-special-operations-forces/.

Military Intelligence Professional Bulletin. “Narrative Manipulation, Malinfluence Operations, and Cognitive Warfare Through Large Language Model Poisoning with Adversarial Noise.” MIPB, July–December 2025. https://mipb.ikn.army.mil/issues/jul-dec-2025/narrative-manipulation-malinfluence-operations-and-cognitive-warfare-through-large-language-model-poisoning-with-adversarial-noise/.

Opinio Juris. “The Pentagon/Anthropic Clash Over Military AI Guardrails.” Opinio Juris, February 26, 2026. http://opiniojuris.org/2026/02/26/the-pentagon-anthropic-clash-over-military-ai-guardrails/.

OWASP GenAI Security Project. “LLM04:2025 Data and Model Poisoning.” OWASP, 2025. https://genai.owasp.org/llmrisk/llm042025-data-and-model-poisoning/.

Polytechnique Insights. “Cognitive Warfare: What Seven Years of Military-Civilian Research Reveals.” Polytechnique Insights, November 5, 2025. https://www.polytechnique-insights.com/en/columns/society/cognitive-warfare-what-seven-years-of-military-civilian-research-reveals/.

Souly, Alexandra, et al. “Poisoning Attacks on LLMs Require a Near-constant Number of Poison Samples.” arXiv preprint arXiv:2510.07192, October 8, 2025. https://arxiv.org/abs/2510.07192.

The Alan Turing Institute. “LLMs May Be More Vulnerable to Data Poisoning than We Thought.” The Alan Turing Institute Blog, 2025. https://www.turing.ac.uk/blog/llms-may-be-more-vulnerable-data-poisoning-we-thought.

Wikipedia. “Fifth-generation warfare.” Last modified October 10, 2025. https://en.wikipedia.org/wiki/Fifth-generation_warfare.

These various techniques of influencing targeted people have been going on forever, but with AI, they have new acronyms and it can happen much faster.

Now and always people who are not naturally inclined question and compare new data against their own experiences are the most easily influenced.

It's always been a weedom thing to look at all kinds of sources. So it's wild to see more and more people from disparate demographics and political leanings converging on the old Alex Jones, Infowars intro: "There's a war on for your mind!"

Thank you for this very informative detail on the realities and directions of AI. It sets out my concerns and well beyond.

I don't do Apple and have only very limited use of Windows 10. With Android the tentacles are palpable. Their efforts to discover are not imo confined to their AI apps.

Being forewarned is helpful. To me the next issue for those using AI is how to independantly acquire the knowledge base and grounding needed to adequately assess the validity of AI offerings.

The only (possibly flawed approach) possibly helpful strategy might be Brave/DuckDuckGo searchs for pubications relating to topics of concern. Maybe books. Maybe interviews. Maybe classes.

I'll be continue to learn and reflect via independant trusted sources on topics of my highest concerns. Thank heavens for you great Doctors help!

Your message is vital! Could even warrant a 2nd edition of PsyWars. I hope you can get it, its perspectives out far and wide!

Now, on to coping. Spring and time change on the horizon, along with more snow? :(